AI Investment ROI: Real Data From a 9-Developer Team

TL;DR: A 9-developer team spent $428 in one day on AI coding tools across 46 sessions. Two developers accounted for 73% of the spend, but with completely different usage patterns. One ran 26 exploratory sessions across multiple repos. The other concentrated $150 into 7 deep sessions in a single repo. Token spend alone tells you nothing. Session-level AI investment ROI tracking shows you how each developer uses AI as a tool and whether that spend is actually producing results.

Everyone wants to know if their AI investment is paying off. Engineering leaders ask it in every planning meeting. CFOs ask it in every budget review. And the honest answer from most teams is: “We think so, but we can’t really prove it.”

That’s not because the ROI isn’t there. It’s because nobody is measuring it properly.

What does $428/day in AI spend actually produce?

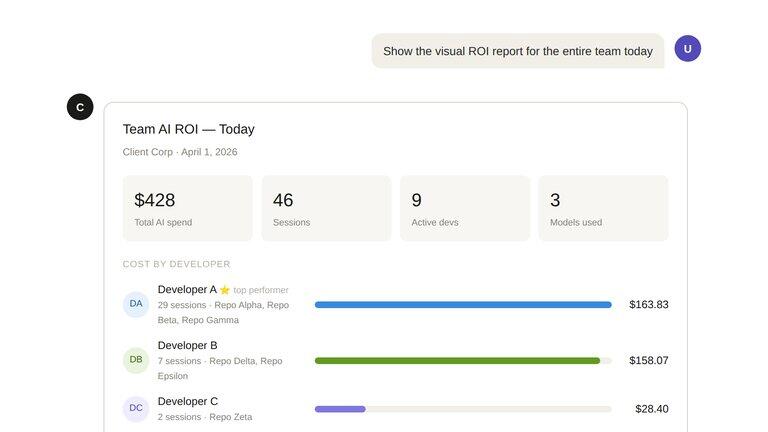

We pulled a real report from a production team. Nine developers, one day, $428 in total AI spend across 46 coding sessions. Three models in the mix: Claude Opus 4.6, Claude Sonnet 4.6, and Claude Haiku 4.5.

Here’s what the raw numbers look like:

Real production data, anonymized. Generated by Cogniscape.

If you stopped at the invoice, you’d see $428 and ask “is that worth it?” But that question is almost meaningless without context. Worth it compared to what? For how much output? Spread across how many people doing what kind of work?

This is the core problem with measuring AI investment ROI in software teams. The spend is easy to track. The value is not.

What the spending patterns actually reveal

The most interesting finding wasn’t the total. It was the distribution.

Developer A and Developer B together accounted for 73% of the day’s AI spend. But their patterns looked nothing alike.

Developer A ran 26 sessions across 3 repositories, mixing models throughout the day. This looks like iterative, exploratory work: trying approaches, switching context, using cheaper models for quick tasks and Opus for the heavy lifting. Total spend: $163.

Developer B concentrated $150 into just 7 sessions, almost entirely in a single repository. One Opus session alone cost around $80. This looks like deep, focused work: a complex feature or refactor that required sustained reasoning from the most capable model.

A standup or a PR list would show both developers as “equally active.” The AI ROI data shows something fundamentally different about how each one uses AI as a tool.

Neither pattern is wrong. But if you’re trying to understand your AI investment ROI, you need to know this difference exists. A team where everyone works like Developer A has different cost dynamics than one where people work like Developer B. And a team where some developers barely use AI at all (Developers E through I spent under $20 each) might have an adoption gap worth investigating.
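The contrast between the two patterns is easiest to see as average cost per session. The following is a minimal sketch that uses only the figures quoted above (total spend, and each developer's spend and session count); the function and variable names are illustrative.

```python
# Figures quoted in the report above; nothing else is assumed.
TOTAL_SPEND = 428.0  # dollars, whole team, one day

developers = {
    "Developer A": {"spend": 163.0, "sessions": 26},
    "Developer B": {"spend": 150.0, "sessions": 7},
}


def summarize(devs, total):
    """Return each developer's share of total spend and average session cost."""
    summary = {}
    for name, d in devs.items():
        summary[name] = {
            "share_of_total": d["spend"] / total,
            "avg_cost_per_session": d["spend"] / d["sessions"],
        }
    return summary


summary = summarize(developers, TOTAL_SPEND)
combined_share = sum(d["spend"] for d in developers.values()) / TOTAL_SPEND

for name, stats in summary.items():
    print(f"{name}: {stats['share_of_total']:.0%} of spend, "
          f"${stats['avg_cost_per_session']:.2f} per session")
print(f"Combined share: {combined_share:.0%}")
```

On these numbers, Developer B's average session costs more than three times Developer A's, even though their daily totals are close.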

Why token spend is a terrible ROI metric

Most teams that try to measure AI coding ROI do it backwards. They start with token spend and try to justify it. “We spent $12,000 on AI last quarter, so it better be worth it.”

But token spend is an input metric. It tells you what you consumed, not what you produced. It’s like measuring developer productivity by looking at how much coffee the team drinks.

Real AI investment ROI requires connecting spend to outcomes:

- How many features were shipped with AI assistance?

- How many bugs were caught and fixed during AI sessions?

- What technical decisions were made, and were they good ones?

- How much developer time was saved compared to doing the same work manually?

The session-level data in that report starts to make this possible. When you can see that Developer B’s $80 Opus session happened in a single repository, you can go look at what that session actually produced. Maybe it was a complex database migration that would have taken two days manually. Maybe it was a rabbit hole that produced nothing. The point is: now you can check.

With Cogniscape, you can drill down further. You can check the ROI of each individual pull request, or zoom out and see the ROI of an entire saga: a chain of related sessions, PRs, and issues that together form a complete feature or initiative. That’s where the real picture comes together: not just “how much did this session cost?” but “how much did it cost to build this entire feature, and was it worth it?”

The model mix matters more than you think

The report shows an interesting model distribution: 67% of spend on Opus ($254), 31% on Sonnet ($154), and 2% on Haiku ($10). But session counts tell a different story: 12 Opus sessions vs. 40 Sonnet sessions vs. 5 Haiku sessions.

That means Opus sessions cost roughly 5x more per session than Sonnet ones. Are they 5x more valuable? Sometimes yes, sometimes no. The point is that you can’t answer that question without session-level tracking.
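The per-session comparison can be reproduced directly from the dollar figures and session counts as quoted above. This is a rough sketch; the dictionary layout and function name are illustrative.

```python
# Spend and session counts per model, as quoted in the report.
model_usage = {
    "Opus": {"spend": 254.0, "sessions": 12},
    "Sonnet": {"spend": 154.0, "sessions": 40},
    "Haiku": {"spend": 10.0, "sessions": 5},
}


def cost_per_session(usage):
    """Average dollars per session for each model."""
    return {model: u["spend"] / u["sessions"] for model, u in usage.items()}


per_session = cost_per_session(model_usage)
opus_vs_sonnet = per_session["Opus"] / per_session["Sonnet"]

for model, cost in per_session.items():
    print(f"{model}: ${cost:.2f} per session")
print(f"Opus costs {opus_vs_sonnet:.1f}x more per session than Sonnet")
```

An average hides a lot: a single $80 Opus session pulls the mean up sharply, which is one more reason to look at individual sessions rather than per-model totals.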

Teams that optimize their AI investment ROI learn which tasks actually benefit from the most expensive models and which ones do just fine with Sonnet or Haiku. That kind of optimization doesn’t come from looking at a monthly bill. It comes from understanding what each session accomplished relative to its cost.

What “good” AI ROI looks like

Based on production data we’ve seen across teams, here’s a rough framework for thinking about AI investment ROI:

The math is straightforward. If a developer spends $50/day on AI tooling and it saves them 2 hours of work, that’s a good deal. Senior developer time costs $75-150/hour depending on market. So $50 in AI spend replacing $150-300 in developer time is a 3-6x return.
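As a quick check of that arithmetic, here is a small sketch. The function name is hypothetical, and it counts only time saved, not the harder-to-measure value discussed next.

```python
def roi_multiple(ai_spend, hours_saved, hourly_rate):
    """Value of developer time saved, divided by what was spent on AI."""
    if ai_spend <= 0:
        raise ValueError("ai_spend must be positive")
    return (hours_saved * hourly_rate) / ai_spend


# $50/day in AI spend, 2 hours saved, at both ends of the $75-150/hour range.
low = roi_multiple(ai_spend=50, hours_saved=2, hourly_rate=75)
high = roi_multiple(ai_spend=50, hours_saved=2, hourly_rate=150)

print(f"Return: {low:.0f}x to {high:.0f}x")
```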

But the real ROI is in the work that wouldn’t happen otherwise. The documentation that never gets written. The edge cases that never get tested. The refactoring that never gets prioritized. AI agents make this work economically viable for the first time. That’s harder to measure but often more valuable than pure time savings.

The hidden cost is in sessions that produce nothing. An $80 session that goes in circles is worse than no session at all, because it also consumed developer attention. Visibility into session outcomes is how you catch this pattern before it becomes a habit.

How to actually measure AI ROI for your team

If you want to move beyond “we spent X on tokens” and actually understand your AI investment ROI, you need three things:

- Per-developer, per-session cost tracking. Not aggregated monthly bills. Individual sessions tied to specific repositories and work items.

- Session outcome visibility. What did each session actually produce? Plans, decisions, code changes, bug fixes. This is what AI coding observability is built to capture. Without it, you’re just staring at a cost spreadsheet.

- Baseline comparison. How long would this work have taken without AI? This is always an estimate, but experienced engineers can usually give a reasonable range.
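To make those three requirements concrete, here is one way a session record could be shaped. This is a sketch under stated assumptions, not Cogniscape's schema: the `Session` fields, the helper functions, the $25 threshold, and the sample records are all hypothetical and exist only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Session:
    # Hypothetical record layout; every field name here is an assumption.
    developer: str
    repo: str
    model: str
    cost_usd: float
    outcomes: list = field(default_factory=list)  # e.g. ["bug fix"]
    baseline_hours: float = 0.0  # engineer's estimate of the manual effort


def cost_by_developer_and_repo(sessions):
    """Sum session cost for each (developer, repo) pair."""
    totals = defaultdict(float)
    for s in sessions:
        totals[(s.developer, s.repo)] += s.cost_usd
    return dict(totals)


def costly_sessions_without_outcomes(sessions, threshold_usd):
    """Sessions at or above the threshold that recorded no outcome."""
    return [s for s in sessions if s.cost_usd >= threshold_usd and not s.outcomes]


# Made-up sample records, not taken from the report.
sessions = [
    Session("dev-1", "api", "opus", 80.0, ["schema migration"], baseline_hours=12.0),
    Session("dev-1", "api", "sonnet", 4.0, ["bug fix"], baseline_hours=0.5),
    Session("dev-2", "web", "opus", 30.0),  # no recorded outcome
]

totals = cost_by_developer_and_repo(sessions)
flagged = costly_sessions_without_outcomes(sessions, threshold_usd=25.0)

print(totals)
print([f"{s.developer}/{s.repo}: ${s.cost_usd:.0f}" for s in flagged])
```

Keeping cost, outcomes, and the baseline estimate on the same record is the design choice that matters: it lets a single query surface expensive sessions that produced nothing.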

Cogniscape generates reports like the one above automatically. It captures session-level data from AI coding tools, correlates it with GitHub activity, and surfaces the patterns that matter: who’s spending what, on which repositories, with which models, and what those sessions actually produced.

Want to see this in action? Our homepage has a live chat demo powered by real Cogniscape development data. Ask it questions like “What caused the 95% data loss incident?” and watch the AI reconstruct the full story from actual events.

Try the live demo →

The teams that measure will win

AI coding tools are only getting more capable and more expensive. The gap between teams that understand their AI investment ROI and those that don’t will keep growing.

The ones that measure will know which developers need training, which tasks benefit from expensive models, and where AI spend is actually producing value. The ones that don’t will keep guessing, and eventually, someone will ask them to justify the line item.

If you want to see what this looks like for your team, book a 30-minute briefing and we’ll walk through real data from production teams. Or explore the Cogniscape documentation to understand how session-level AI ROI tracking works under the hood.

AI investment ROI: common questions

How do you calculate AI coding ROI?

AI investment ROI for coding tools is calculated by comparing the cost of AI sessions (token spend, model costs) against the value produced: developer time saved, features shipped, bugs fixed. The key is session-level tracking, not aggregated monthly bills. You need to connect individual AI sessions to specific work outcomes to get a meaningful number.

Is $428/day a lot for a 9-person team?

That’s about $48 per developer per day ($428 across 9 developers). For context, a senior developer costs $600-1,200/day in salary and benefits. If AI tooling saves each developer even one hour per day, the ROI is strongly positive. The question isn’t whether $48/day is a lot. It’s whether each developer’s AI sessions are producing value relative to their cost.
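Another way to frame the same numbers is the break-even point: how much time the tooling has to save each day to pay for itself. The sketch below assumes an 8-hour working day to turn the daily cost range into an hourly rate; that assumption and the function name are not from the report.

```python
def break_even_minutes(daily_ai_spend, developer_daily_cost, hours_per_day=8):
    """Minutes of developer time that cost the same as one day's AI spend."""
    hourly_cost = developer_daily_cost / hours_per_day  # assumes an 8-hour day
    return daily_ai_spend / hourly_cost * 60


spend_per_dev = 428 / 9  # average daily AI spend per developer

at_high_cost = break_even_minutes(spend_per_dev, developer_daily_cost=1200)
at_low_cost = break_even_minutes(spend_per_dev, developer_daily_cost=600)

print(f"Spend per developer: ${spend_per_dev:.2f}/day")
print(f"Break-even: {at_high_cost:.0f} to {at_low_cost:.0f} minutes saved per day")
```

Under those assumptions the average spend pays for itself once it saves somewhere between 20 and 40 minutes a day, which is why the per-session outcome question matters more than the daily total.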

What’s the difference between token spend and AI ROI?

Token spend is an input metric: how much you consumed. AI ROI is an outcome metric: how much value that consumption produced. Many teams track token spend and call it ROI measurement. It’s not. Real ROI requires connecting spend to results: features delivered, time saved, decisions made. Session-level observability bridges that gap.

Do I need special tooling to measure this?

You need session-level visibility into AI coding tool usage, tied to developer identity and repository context. Cogniscape provides this automatically by capturing data from AI coding sessions and correlating it with GitHub, Linear, and other engineering tools. Without this kind of tooling, most teams end up with nothing more than an API billing page.